Testing the limits of logical reasoning in neural and hybrid models

Published in the Annual Conference of the North American Chapter of the Association for Computational Linguistics, 2024

We study the ability of neural and hybrid models to generalize logical reasoning patterns. We created a series of tests for analyzing various aspects of generalization in the context of language and reasoning, focusing on compositionality and recursiveness. We used these tests to study syllogistic logic in hybrid models, where the network assists in premise selection. We analyzed feed-forward, recurrent, convolutional, and transformer architectures. Our experiments demonstrate that, although the models can capture elementary aspects of the meaning of logical terms, they learn to generalize logical reasoning only to a limited degree.

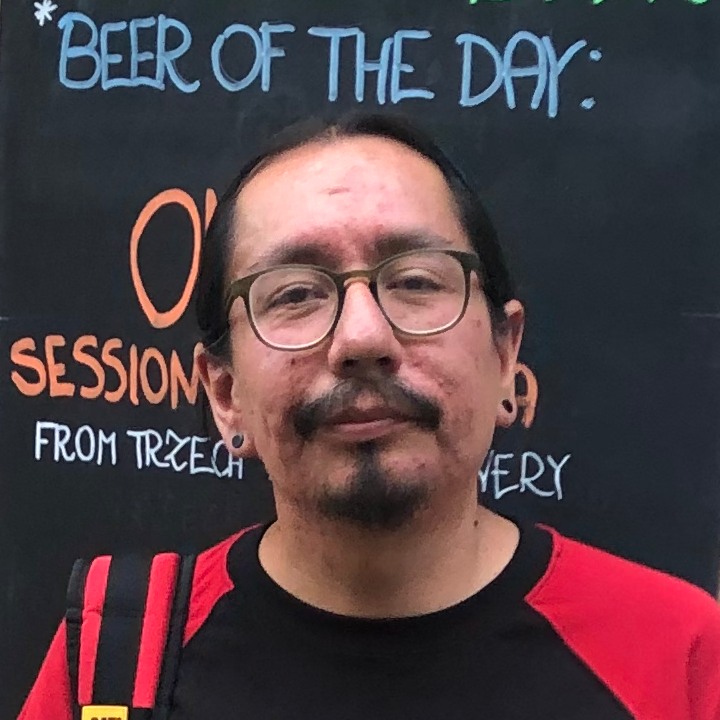

Recommended citation: Manuel Vargas Guzmán, Jakub Szymanik, and Maciej Malicki (2024). "Testing the limits of logical reasoning in neural and hybrid models." Findings of the Association for Computational Linguistics: NAACL. Pages 2267–2279.
